Aren’t these sizes a marketing gimmick anyway? They used to mean the gate size of a transistor, but I don’t think that’s been the case for a few years now.

They’re generally consistent within a single manufacturer’s product lines; however, you absolutely cannot compare them between manufacturers because the definitions are completely different.

And that’s what benchmarks are for

No, it still means what it always has, and each step still introduces good gains.

It’s just that each step is getting smaller and MUCH more difficult, and we still aren’t entirely sure what to do after we get to 1nm. In the past we were able to go from 65nm in 2006 to 45nm in 2008. We had 7nm in 2020, but in that same two-year time frame we were only able to get to 5nm.

And now we’ve reached the need for decimal steps with this 1.6.

Later each new generation process became known as a technology node[17] or process node,[18][19] designated by the process’ minimum feature size in nanometers (or historically micrometers) of the process’s transistor gate length, such as the “90 nm process”. However, this has not been the case since 1994,[20] and the number of nanometers used to name process nodes (see the International Technology Roadmap for Semiconductors) has become more of a marketing term that has no standardized relation with functional feature sizes or with transistor density (number of transistors per unit area).[21]

https://en.wikipedia.org/wiki/Semiconductor_device_fabrication#Feature_size

Personally, I don’t care if they try to simplify these extremely complicated chip layouts, but continuing to call it X nanometers when nothing on the chip is that size is just plain misleading.

yes, boss, I have always worked 42 hours per week… Huh? No, what do you mean “actual hours”?

Hahaha, I love that!

It hasn’t meant what it traditionally meant since FinFET, because FinFET involves a folding process, so it’s not necessarily a transistor in the traditional sense.

It’s the main thing Intel was complaining about when it decided to rename its processes to be in line with TSMC/Samsung. Intel’s 10nm process actually packs transistors more densely than both TSMC’s 7nm and Samsung’s 8nm. So you have to take a stance: either TSMC/Samsung are overrepresenting the definition, or Intel is underrepresenting it. Because of that, one of two things needs to happen:

TSMC/Samsung need to increase the number of their process, because it’s illogical that a competitor has a denser node with a higher number.

or

Intel renames its process with a lower number to better match its density compared to TSMC/Samsung. Because this is the only option Intel as a company has the power to take, this is what they did, and they came under fire for it.

Regardless, the nm stated in the name does not represent what it traditionally meant; whatever stance you take, some company is lying about its numbers.

No doubt there’s lying and marketing spin going on, but these nm numbers aren’t all just fluff. They’re kinda like how hard drive manufacturers market drives. They’ll say it’s “2TB” or something, but in reality it offers only 1.8TB of usable space. It’s similar with nm sizes; a 7nm from TSMC might stretch the truth a bit, but it’s still somewhat grounded in real specs, not wildly off.

The hard drive one is more about measuring things in base 10 versus base 2. It’s caused by the difference between 1000 (10^3) and 1024 (2^10), which is why there’s a difference between gibibytes and gigabytes.
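To put numbers on the base-10 vs. base-2 point, here is a minimal Python sketch. The function name `decimal_tb_to_tib` is made up for illustration, and it assumes the drive label uses decimal terabytes (10^12 bytes) while the operating system reports binary tebibytes (2^40 bytes).

```python
def decimal_tb_to_tib(terabytes: float) -> float:
    """Convert marketed decimal terabytes (10**12 bytes) to tebibytes (2**40 bytes)."""
    total_bytes = terabytes * 10**12
    return total_bytes / 2**40


# A drive sold as "2TB", as reported in binary units.
print(f"{decimal_tb_to_tib(2):.2f} TiB")  # prints "1.82 TiB"
```

So a “2TB” drive showing up as roughly 1.8 is just the unit mismatch, before any filesystem overhead is counted.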

The FinFET decision was basically this: physically it’s supposed to be one number, but folding offers a performance increase (though not exactly equal to doubling the transistors), so they picked an arbitrary lower number to represent its performance. The problem is that because it’s arbitrary, TSMC/Samsung get all the power to fudge the numbers.

If Intel’s 10nm was denser than TSMC 7, it shouldn’t have been called TSMC 7; it should have been closer to TSMC 10 or 11.

12/16nm is when FinFET technology came into use, and that’s where the numbers started to get fudged.

It refers to feature size, rather than component.

So they found a way to inscribe more arcane runes onto the mystic rock thus increasing its mana capacity?

That’s pretty much it yeah

Can’t wait for Python on top of webassembly on top of react on top of electron Frameworks to void that advancement

The more efficient the machines, the less efficient the code. Such is the way of life.

I’m wondering how much further size reductions in lithography technology can take us before we need to find new exotic materials or radically different CPU concepts.

We’ve already been doing radically different design concepts, chiplets being a massive one that jumps to mind.

But also things like specialised hardware accelerators, 3D stacking, or the upcoming backside power delivery tech.

I mean, chiplets are neat… but they are just the same old CPU, just in Lego, and that’s mostly just to increase yields vs. monolithic designs. Same with accelerators, stacking, etc.

I mean radical new alien designs (Like quantum CPUs as an example) since we have to be reaching the limit of what silicon and lithography can do.

Photonic is the game changer. Putting little LEDs on chips and making those terabyte per second interconnects with low heat and long range and better signal integrity

This is what I’m talking about. This kind of weird, new shit, to overcome the limits we have to be running into by now with the standard silicon and lithography that we’ve been using and evolving for 40 years.

I don’t think it is mostly just the same CPU with a slight twist. It’d be mind-blowing tech if you showed it to some electrical engineers from 20, 15, shit even 10 years ago. Chiplets were and are a big deal, and have plenty of advantages beyond yield improvements.

I also disagree with stacking not being a crazy advancement. Stacking is big, especially for memory and cache, which most chip designs are starved of (and will get worse as they don’t shrink as well)

There’s more to new, radical, chip design than switching what material they use. Chiplets were a radical change. I think you’re only not classifying them as an “alien” design as you’re now used to them. If carbon nanotube monolithic CPU designs came out a while ago, I think you’d have similarly gotten used to them and think of them as the new normal and not something entirely different.

Splitting up silicon into individual modules and being able to trivially swap out chiplets seems more alien to me than if they simply moved from silicon transistors to [material] transistors.

Well, seeing as the diameter of a silicon atom is about 0.2nm, I’m guessing not long.

What’s the threshold for quantum tunneling to be an issue? Cuz at such small scales, particles can…teleport through stuff.

It’s important to note that 1.6nm is just a marketing naming scheme and has nothing to do with the actual size of the transistors.

https://en.m.wikipedia.org/wiki/3_nm_process

Look at the 3nm process and how the gate pitch is 48nm and the metal pitch is 24nm. The names of the processes stopped having to do with the size of the transistors over a decade ago. It is stupid.
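For a sense of scale, here is a quick Python sketch that divides the pitches quoted above by the number in the process name. The 48nm and 24nm figures are the ones from this comment; the helper name `pitch_to_name_ratio` is hypothetical.

```python
def pitch_to_name_ratio(pitch_nm: float, node_name_nm: float) -> float:
    """How many times larger a real pitch is than the number in the node name."""
    return pitch_nm / node_name_nm


NODE_NAME_NM = 3.0  # the "3nm" in the process name

for label, pitch_nm in (("gate pitch", 48.0), ("metal pitch", 24.0)):
    ratio = pitch_to_name_ratio(pitch_nm, NODE_NAME_NM)
    print(f"{label}: {pitch_nm:.0f}nm is {ratio:.0f}x the number in the name")
```

By those figures the gate pitch is 16 times the “3nm” label and the metal pitch is 8 times it.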

That is ridiculous. Does 3nm mean anything, or is it purely marketing?

Every process name is basically a marketing gimmick. You could sort of use the previous names as a ratio to get a general idea of uplift, but that isn’t really accurate either. To further confuse things, Intel’s naming scheme had bigger nm values for processes of a similar size to lower-numbered names from TSMC.
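A rough Python sketch of that “use the names as a ratio” idea. It assumes, purely for illustration, that the node name were a literal linear dimension, so density would scale with the square of the ratio. Real processes don’t follow this, and the function name `implied_density_gain` is made up.

```python
def implied_density_gain(old_nm: float, new_nm: float) -> float:
    """Density gain implied if node names were literal linear feature sizes."""
    return (old_nm / new_nm) ** 2


# Node-name pairs mentioned in this thread; the result is name-implied only,
# not a measured transistor density.
for old_nm, new_nm in ((65, 45), (7, 5), (2, 1.6)):
    gain = implied_density_gain(old_nm, new_nm)
    print(f"{old_nm}nm -> {new_nm}nm: about {gain:.2f}x per unit area")
```

Taken literally, 65nm to 45nm and 7nm to 5nm both imply roughly a doubling, while 2nm to 1.6 implies about 1.56x, which is a ballpark at best.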

Quantum tunneling has been an ongoing issue. Basically, they are using different transistor designs and different semiconductor materials at the gate, accepting that it is a reality, and adding more error checks/corrections.

I think I’m just skipping the whole 20,30,40 series as well as the 7nm series…my 9900k and 1080ti are doing me just fine. Maybe 2026 I’ll consider upgrading.

This is the best summary I could come up with:

TSMC disclosed that A16 will combine its nanosheet transistor design, set to be introduced on 2nm, with Super Power Rail technology.

According to Reuters, TSMC indicated that it does not need ASML’s latest High NA EUV photolithography machines in order to produce chips with its A16 process.

TSMC’s N4C process adds area-efficient design rules that are compatible with its popular N4P process, but which will deliver an 8.5 percent die cost reduction for “value-tier” products, TSMC claims.

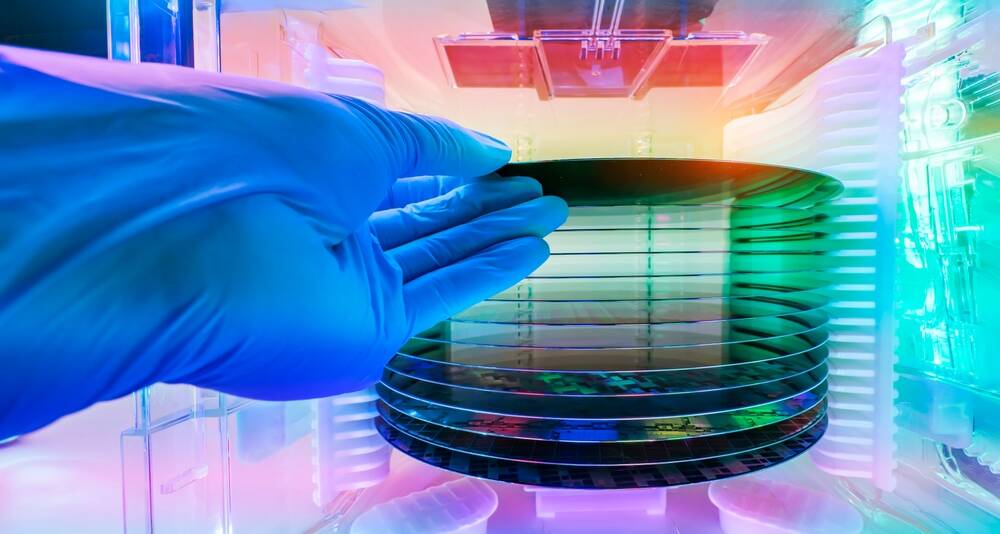

TSMC’s System-on-Wafer (SoW) technology enables a large array of dies on a 300 mm wafer to form a single system, boosting compute power while occupying far less space.

TSMC also said it is developing Compact Universal Photonic Engine (COUPE) technology for high-speed interconnects, citing AI as an application that will need this.

TSMC reported revenue up year-on-year for the first quarter of 2024 earlier this month, beating expectations, and said it anticipated that demand for AI-capable PCs and datacenter kit will drive higher sales of the silicon it produces this year.
