I use a 13-year-old PC because a newer one will be infected with Windows 11. (The company refuses to migrate to Linux because some of the software they use isn’t compatible.)

And I’m saying that I could have been that developer if I were twenty years younger.

They’re not bad developers, they just haven’t yet been hurt enough to develop protective mechanisms against scams like these.

They are not the problem. The scammers selling LLMs as something they’re not are.

I was lucky enough to not have access to LLMs when I was learning to code.

Plus, over the years I’ve developed a good thick protective shell (or callus) of cynicism, spite, distrust, and absolute seething hatred towards anything involving computers, which younger developers still lack.

No. LLMs are very good at scamming people into believing they’re giving correct answers. It’s practically the only thing they’re any good at.

Don’t blame the victims, blame the scammers selling LLMs as anything other than fancy but useless toys.

Having to deal with pull requests defecated by “developers” who blindly copy code from ChatGPT is a particularly annoying and depressing waste of time.

At least back when they blindly copied code from Stack Overflow they had to read through the answers and comments, try to figure out which one fit their use case best and why, and maybe learn something… Now they just assume the LLM is right (despite the fact that they asked the wrong question, and even if they had asked the right one it’d’ve given the wrong answer) and call it a day: no brain activity or learning whatsoever.

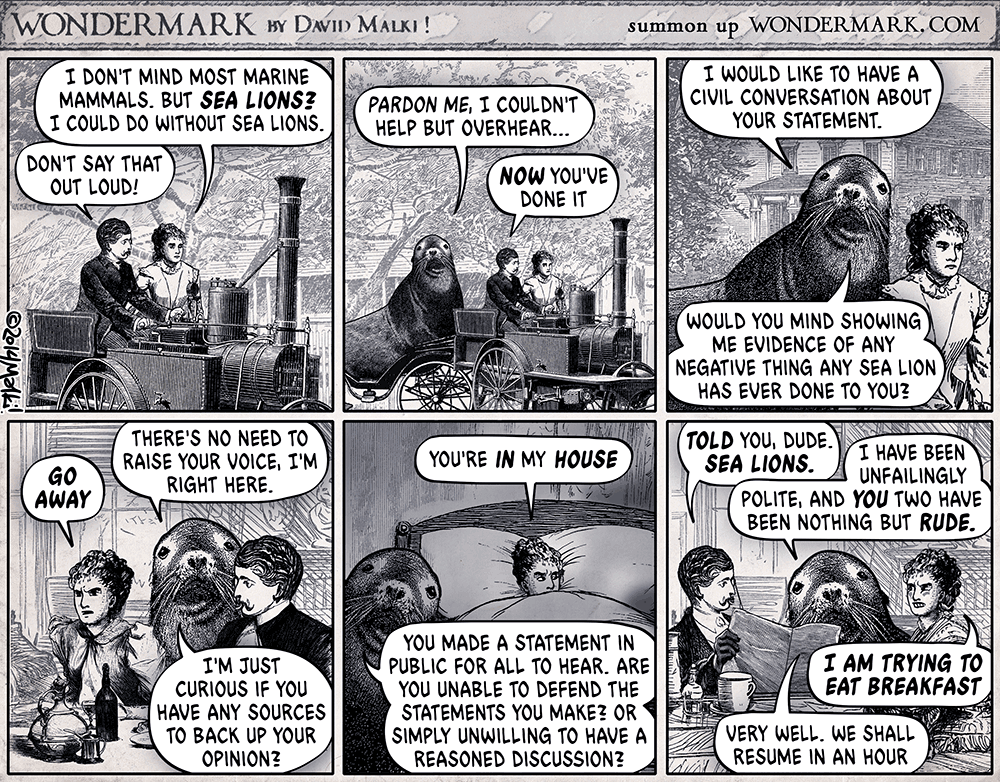

Source: https://wondermark.com/c/1062/

Wikipedia article: https://en.wikipedia.org/wiki/Sealioning

Better resolution:

If it’s alive and plucked, it’s just regular sex.

— Plato

Lemon ice cream is great, though.

Also, apples, acidic…? I hate all apples except Granny Smiths, because the rest taste sweet and cloying as fuck… Granny Smiths are the only ones I’ve ever found with a nice acidic taste…

> every human alive is guilty

We are, though, if we’re not actively doing our best to stop all this. We’re all at least necessary accomplices.

You’re a necessary accomplice. Organise. Burn shit up and build better things on top of the ashes.

I’m both, and while I do hate myself, I don’t think it’s related, so I’m not sure I get it.

(I hate computers more, though, except when they’re turned off: no bugs when they’re off. But they’re the only thing I’m good enough at to make a living off of.)

The post specifically mentions blue eyes and blond hair, both of which are recessive, if I’m not mistaken.

This makes me think it might have been written by someone who knew what recessive traits are and was just mocking the particular brand of racist that would want to perform “an epic bleaching”…

> makes it sound like they’re all equal, and there hasn’t been any progression

Programming peaked with Lisp (and SQL for database stuff).

Every “progression” made since Lisp has been other languages adding features to (partially but not quite completely) do stuff that could already be done in Lisp, but less well implemented (though probably with fewer parentheses).

They are all flawed and they all encourage some bad design patterns.

On the other hand, Lisp.

What’s worse is that half the coordinates probably ended up as dates…

Are search engines worse than they used to be?

Definitely.

Am I still successfully using them several times a day to learn how to do what I want to do (and to help colleagues who use LLMs instead of search engines learn how to do what they want to do once they get frustrated enough to start swearing loudly enough for me to hear them)?

Also yes. And it’s not taking significantly longer than it did when they were less enshittified.

Are LLMs a viable alternative to search engines, even as enshittified as they are today?

Fuck, no. They’re slower, they’re harder and more cumbersome to use, their results are useless on a good day and harmful on most, and they give you no context or sources to learn from. So in the best-case scenario you get a suboptimal, partial, buggy solution to your problem which you can’t learn anything useful from (even worse, if you learn it as the correct solution you’ll never learn why it’s suboptimal or, more probably, downright harmful).

If search engines ever get enshittified to the point of being truly useless, the alternative isn’t LLMs. The alternative is to grab a fucking book (after making sure it wasn’t defecated by an LLM), like we did before search engines were a thing.